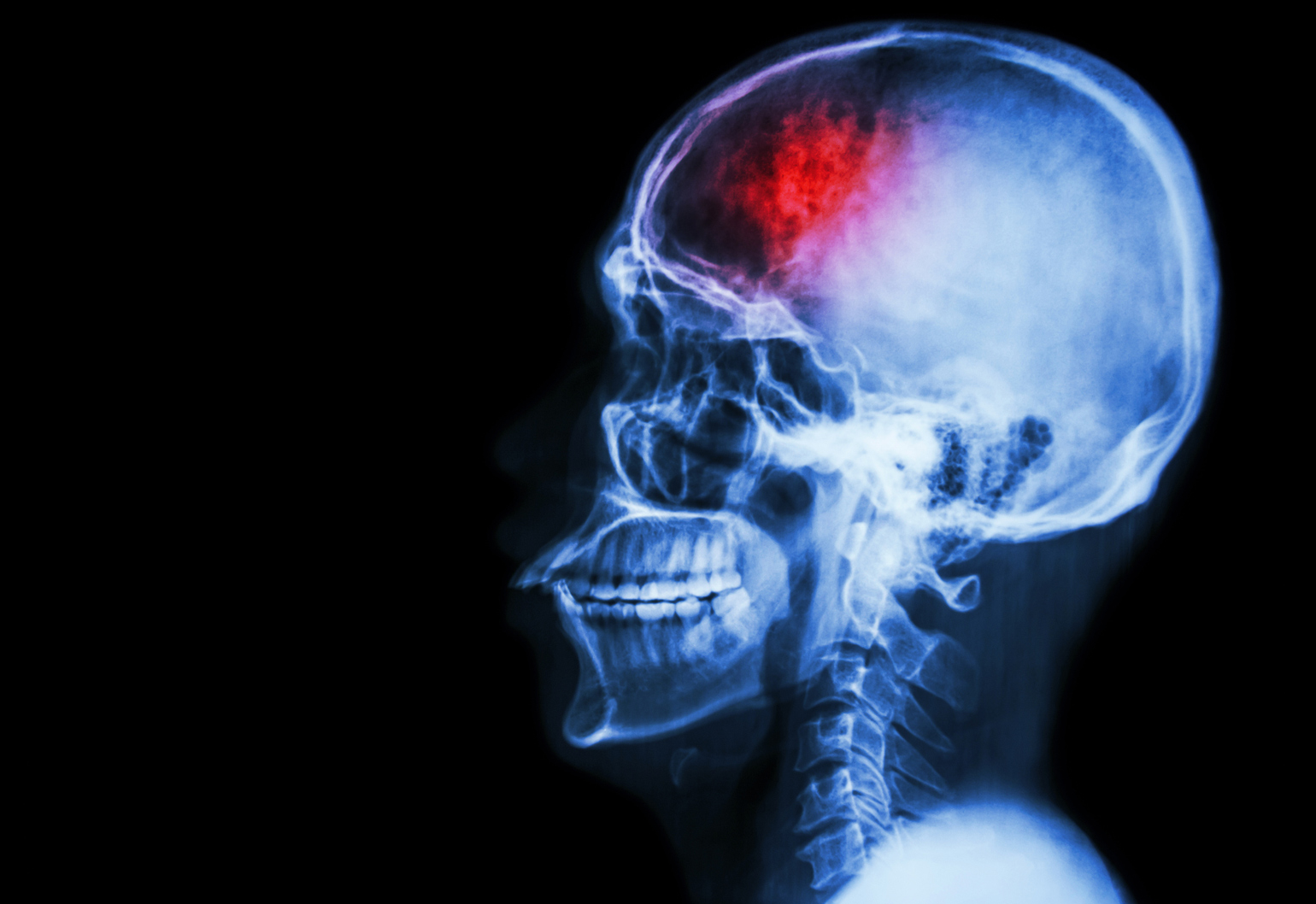

Strokes are the third leading cause of death in Australia, with one stroke occurring every nine minutes. But University of Sydney biomedical engineering students are hoping to change that with a rapid stroke prediction system that uses computer vision and AI.

Working with Associate Professor Noel Young at Westmead Clinical School, a team of students won last year’s MedTech Innovation Competition for their Visual Stroke Prediction (ViSP) System. It uses computer vision and artificial intelligence to generate a risk score for a patient within six hours of the onset of symptoms, and can be used by any hospital with a CT imaging machine.

“The focus of the system is on identifying the risk of either ischemic strokes or haemorrhagic strokes,” said Joshua Riley, a student on the winning team.

“By analysing tissue density and blood flow through computer vision on the 4D scans, we compare this with previous data and clinical knowledge to generate the risk score.”

CT perfusion scans of the patient are uploaded into ViSP and analysed. Clinicians are provided with a report that details the results, which they can then use along with their own knowledge to formulate a decision for each patient.

One of the biggest challenges the team faced during the system’s development was acquiring clinical data for testing, which it overcame by creating a phantom brain made of silicone.

“The phantom brain was constructed using silicone around a 3D-printed model of the middle cerebral artery. Once it was set, we dissolved the printed plastic and we were left with the vascular structure as tunnels in the silicone,” Riley said.

“This allowed us to simulate blood flow through a particular region in the brain.”

Riley first became interested in biomedical engineering when he was trying to figure out what Year 12 subjects to take – he enjoyed mathematics, biology and design and technology, and found biomedical engineering to be a great crossover of the three.

“After multiple Google searches and watching individuals use advanced bionic prosthetics on YouTube, I knew this was what I’d like to study,” he said.

Deep learning

Machine learning and AI are being more widely used in medicine, with major companies such as Google, IBM and Microsoft all making inroads in the area. Google recently announced the open source release of its DeepVariant technology, which it says is “a deep learning technology to reconstruct the true genome sequence from HTS sequencer data with significantly greater accuracy than previous classical methods”.

Meanwhile, in a proof-of-concept study, IBM’s Watson for Genomics recently cut the time needed to accurately interpret genomic data from the 160 hours it took humans to 10 minutes.

Riley believes this integration of AI and machine learning into healthcare will become even more common, allowing smaller hospitals to perform procedures that patients might previously have needed to travel for.