Many experts have concerns about the use of AI on the battlefield, but Engineers Australia member and Chartered engineer Stephen Bornstein CPEng believes the technology could be used to prevent harm.

In 2015, more than 1000 experts in artificial intelligence signed an open letter warning of the dangers of autonomous military technology.

With Steve Wozniak, Stephen Hawking and Elon Musk included among their number, the letter’s signatories said they were concerned that AI weapons technology had “reached a point where the deployment of such systems is — practically if not legally — feasible within years, not decades, and the stakes are high: autonomous weapons have been described as the third revolution in warfare, after gunpowder and nuclear arms”.

They were not the first to be concerned about the ethical implications of bringing artificial intelligence to the battlefield. In 2012, 93 NGOs launched the Campaign to Stop Killer Robots, and, in recent years, the United Nations has, unsuccessfully, sought to ban “lethal autonomous weapons systems” under its Convention on Certain Conventional Weapons. (Australia has been a stumbling block in the efforts, resisting a ban alongside the US, the UK, Russia, Israel and South Korea.)

Ethics are at the forefront of the work Bornstein does, which helps to distinguish the contributions his company, Cyborg Dynamics, makes in the military realm.

In fact, his Athena AI technology uses artificial intelligence to prevent — not cause — casualties in war.

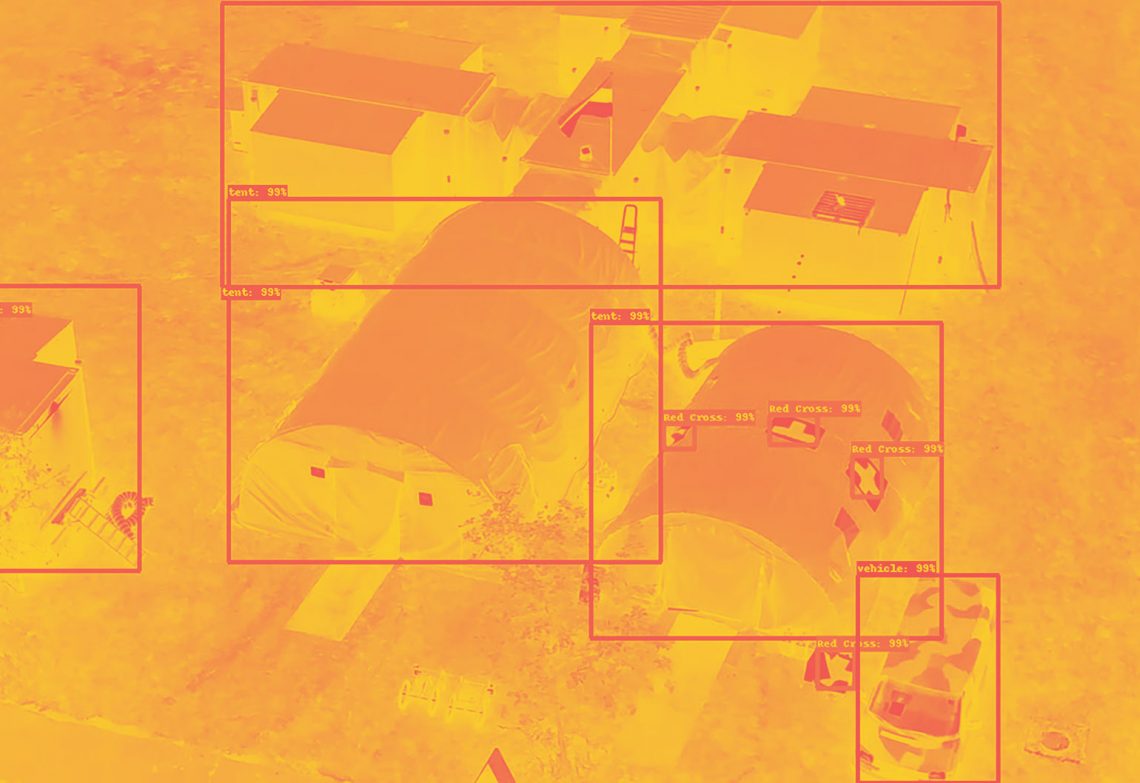

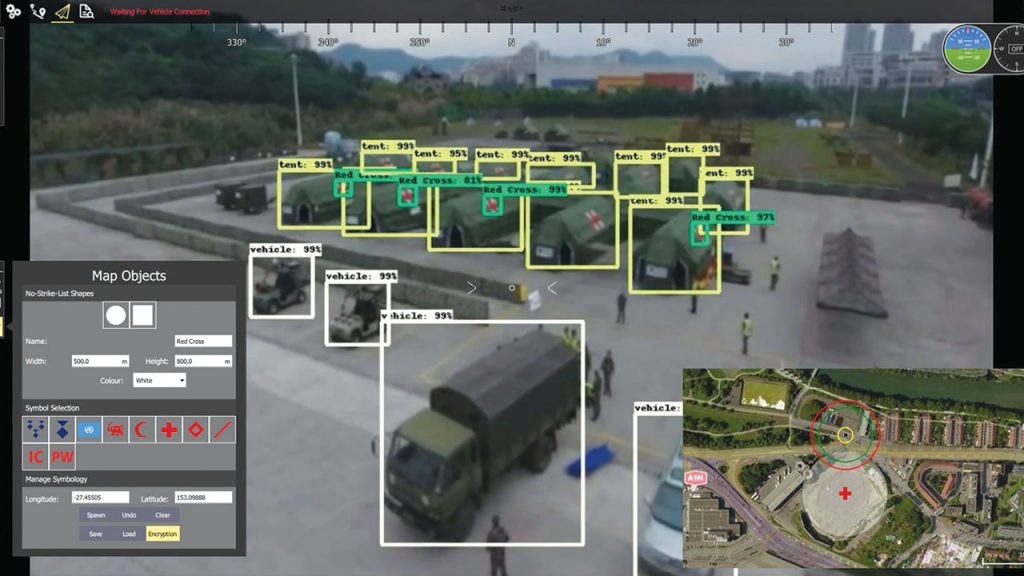

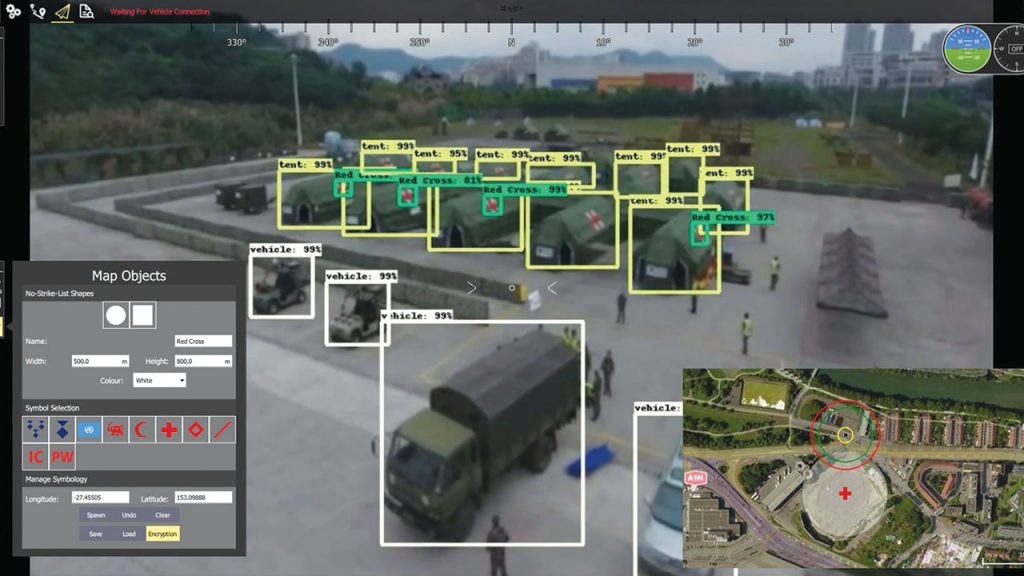

Athena AI is an artificial intelligence system that identifies and classifies objects and locations on a battlefield and tells the soldier using it which ones must not be targeted for legal or humanitarian reasons. These include people, such as civilians and enemy troops who have surrendered, as well as locations such as hospitals and other protected sites.

“Ethics is 100 per cent at the heart of what we’re doing,” Bornstein told create.

“We have a legal and ethical framework that got written and developed with the best ethicists and moral philosophy in the world, [and] which was developed before we coded any algorithms for the project.”

Integrating these ethical concerns into the technology (and ensuring the product would meet the requirements of Article 36 of Additional Protocol I to the Geneva Conventions, which governs the legal review of new weapons) meant asking some tricky questions.

“If we want this system to actually sit [with] someone who can use this in combat, what does it need to do?” Bornstein said.

“What does it legally have to do? And, then what, ethically, do we want it to do?”

Do the right thing

Ethical considerations in combat are not theoretical for Bornstein. He says he has been passionate about defence engineering since childhood, and he began working in the industry in 2011 after studying aerospace engineering.

He has also been an officer in the Australian Army Reserve since 2013.

“I had thoughts about conflict experience. I knew at a minimal level that [ethics] are very important,” he said.

“And, then the Trusted Autonomous Systems Defence Cooperative Research Centre [TASDCRC] had a parallel research program led by UNSW where they had an emphasis on looking at certain ethics problems associated with AI. Trusted Autonomous Systems have a significant research program underway where they had an emphasis on both ethics and law associated with AI.

“This is currently being refocused as an ethics uplift program and guided by a recent Defence report, A Method for Ethical AI in Defence. And they were interested in really good use cases that they can actually apply their ethics theory to products.”

Bornstein said the CRC and his team at Cyborg Dynamics jointly identified a need to use emerging artificial intelligence to improve decision support for military operations.

“We identified that there were a large number of casualties that were from friendly fire in conflict,” he said.

“There were a number of individual cases where there were large amounts of collateral damage in both Afghanistan and Iraq. There was a fuel tanker incident in Afghanistan where 200 civilians were killed. There was a case involving an AC-130 where 60 civilians were targeted by gunship at a hospital.”

The concept originated with a paper written by TASDCRC CEO Jason Scholz. After reading it, Bornstein hoped he could address common fears about artificial intelligence while also tackling problems in human decision-making.

“We understood based on conflict there were a lot of flaws with [human decision-making], and the consequences were really bad,” he said.

“We sort of said, ‘Well, how can we use autonomous vision systems to help a human take that tactical pause and actually look at the situation and have something else help them look at the situation, so they can make a better-informed decision?’”

Bornstein and his team had to develop specialised resources for computer-based vision systems.

“We had a small background using vision-based artificial intelligence for drones, but not in terms of how we can exploit that artificial intelligence to achieve a better outcome,” he said.

Battle ready

That meant training the system to recognise a range of complex situations and inputs.

“That’s a person. That’s a child. That person’s got a gun. That person’s got a Red Cross,” Bornstein demonstrated.

“We actually go through a variety of scenarios and we are consistently looking based on our data set and our use cases: what is the best network to run in a given situation? Sometimes it’s a hybrid of two different techniques, because they want the best of both worlds in some instances — speed and accuracy of the AI.”

And that introduces other ethical questions, such as how to responsibly use the data the system collects.

“How you can ensure that your data is very well validated and very well represented,” he explained. “And then, the third aspect is ensuring that you are using the best algorithms or techniques possible for the vision-based protection.”

Bringing all this together required a lot of talent.

“About five PhDs and 10 years of development expertise have supported the project,” Bornstein recalled.

“There’s three things that are super important to our product. One is the accuracy of our vision-based AI — that we are at the edge with the best possible products. And, then once we run it through one network, we cross check it against another network as well. And, this all happens behind the scenes.

“You have to train the network with really good data that’s well-balanced and unbiased.”

Off the battlefield

Cyborg Dynamics applies its artificial intelligence prowess to a wide variety of problems.

Fuselage simulator

To boost community engagement, the Royal Flying Doctor Service wanted a better fuselage demonstrator that it could use as an educational tool. Cyborg Dynamics responded with a hands-on experience of flying simulated missions. In 2016, the upgraded simulator went to 200 schools across Victoria and Tasmania.

Anti-poaching

To combat wildlife poaching in Mozambique and South Africa, Cyborg Dynamics worked with the International Anti-Poaching Foundation (IAPF) to develop an unmanned aerial vehicle platform that could cover extensive areas more effectively than ground-based rangers. Beginning in 2015, the program helped intercept poachers. The work involved systems integration of flight control and autopilot, computer vision algorithms for tracking, and flight testing. Cyborg Dynamics is now supporting the IAPF with static remote-sensing technologies.

Automated surveying

Align Robotics, a spin-out company from Cyborg Dynamics, uses an autonomous robotic solution for construction instead of the traditional string-and-chalk-lines approach.

Its AutoMARK and TerraMARK robots automate surveying and setting out.