Through his pioneering work integrating haptic technology into robots and virtual reality systems, Engineers Australia’s Professional Engineer of the Year Saeid Nahavandi is transforming how we engage with simulated experiences.

Imagine sitting in the cockpit of a fighter jet, your heart racing as you perform an intricate barrel roll, feeling the g-force as you make a complete rotation in the air.

Now imagine you’re doing this just seven metres off the ground.

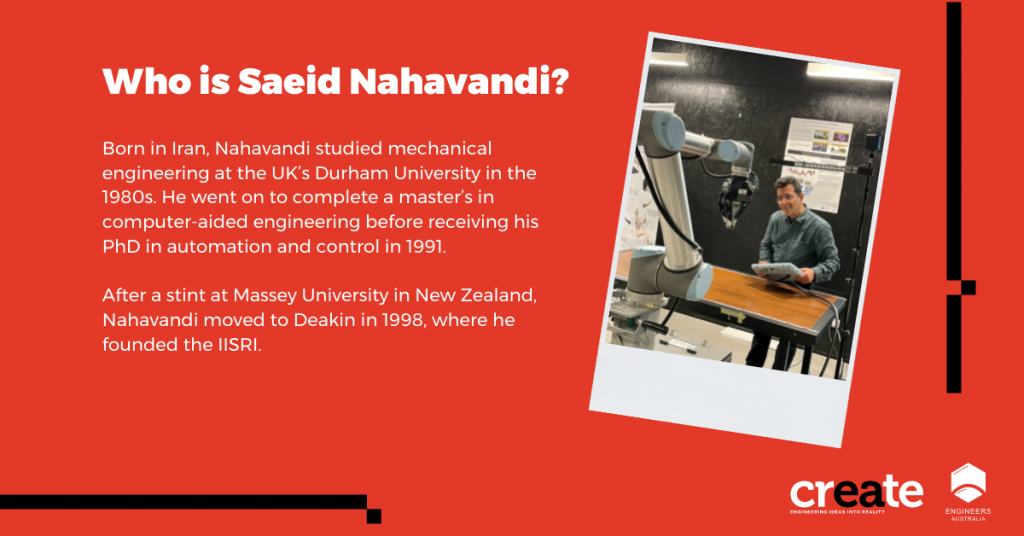

This feat is possible thanks to the Universal Motion Simulator (UMS) developed by Professor Saeid Nahavandi FIEAust CPEng and his team at Deakin University’s Institute for Intelligent Systems Research and Innovation (IISRI). A decade in the making, the UMS is described by Deakin as “the first haptically enabled robot-based motion simulator in the world”.

Essentially a giant robot arm with a seat attached, the machine combines a series of haptic input devices with virtual reality (VR) software to provide a realistic sense of motion. It can simulate everything from the intricate manoeuvres of a fighter jet to a helicopter flight or the passage of an army vehicle. The technology has since been commercialised as the Reconfigurable Driver Simulator (RDS). The Australian Defence Force was among the first customers, purchasing six RDS units in 2019 that will be used to train drivers of Boxer combat reconnaissance vehicles.

“The journey from conceptualisation to production and commercialisation of the UMS was challenging,” Nahavandi said. “At first, people thought the system might be dangerous or that it was too risky to put a human at the end of a robot arm, especially because it has a payload of up to one tonne.

“To give people confidence, I put myself in it first; I’m always the first guinea pig. And through repeated testing we have shown that with the right engineering, and the right tools and techniques, we can develop innovative, safe systems.”

The right touch

Nahavandi oversees a team of 100 researchers drawn from a range of disciplines, including mechanical, electrical, electronics and software engineering, as well as psychology. The aim is to use robotics, simulation modelling and haptics to provide practical solutions to real-world problems, and to commercialise the resulting innovations.

One such innovation is HaptiScan, a haptically enabled robotic ultrasound system that allows sonographers to perform scans remotely. The term “haptic” derives from the Greek haptikos, meaning able to touch or grasp. It is this sensation of touch that Nahavandi has spent the past 30 years trying to capture in his work.

“When I delved into robotic research with tactile sensing in the 1990s, I realised that advances in haptic devices had remained stagnant because the technology had not expanded beyond single-point sensation and was limited to single finger, probe-like operations,” he said.

Nahavandi set about designing a solution, eventually developing the world’s first multi-point haptic control method. This breakthrough fundamentally changed haptic technology by allowing users to “feel” attributes of virtual objects such as roughness, hardness and elasticity, and it opened up a whole new world of augmented reality and VR experiences.

“Leveraging the multi-point haptics control invention, we’ve been able to develop a number of devices, including haptic steering wheels, helicopter control sticks, seats for motion simulators and grippers for medical simulators,” he said.

“To a member of the public, my work might look a bit revolutionary, but to me it’s incremental. I’m always thinking about where technology is going to be in the next 20 or 30 years.”

A human sense

Where Industry 4.0 promised a new industrial revolution born from an increase in automation, Nahavandi believes the future is a human-centric Industry 5.0, where robots work alongside humans.

“Industry 4.0 ignores the human cost of optimising and automating processes. With this new wave, it’s about bringing robots and humans together and getting the best out of both,” he said.

“Robots are becoming even more important, as they can be coupled with the human mind through brain–machine interfaces and advances in AI. Robots should work with humans as collaborators instead of competitors.”

Nahavandi is also passionate about the ethics of artificial intelligence and automation, citing a famous Stephen Hawking quote: “The rise of powerful AI will either be the best or the worst thing ever to happen to humanity. We do not yet know which.”

With this in mind, Nahavandi says the concern for engineers is to ensure ethical requirements are paramount in the design and implementation of intelligent, autonomous technologies. “We should never have a system where humans are out of the loop. We want our systems to receive their high-level commands from humans,” he said.

At the IISRI, PhD student Nicole Toomey is at the forefront of keeping humans in the loop. With her background in psychology, her work involves testing the physiological responses of users to the technology the team develops. “I look at things like brain activity in the frontal lobe and physiological things like heart rate and sweat response,” she said. “We want to make sure each of the projects will be well accepted by the user … Everyone has their own area of expertise and we all come together to create something really solid.”

After three decades, Nahavandi remains as passionate as ever about his work. “You know when you’re a child and it’s the night before Christmas, when you can’t sleep because you just want to open your presents and play with them? That’s how I’ve felt for most of my life,” he said. “I look forward to working; it’s like my hobby. I’m happiest when I’m involved in projects and working on things with my team.”

Wondering who is actually responsible for the actions of automated systems? Learn more about the ethical responsibilities of technology and automation in Engineers Australia’s on-demand training session, Morality and Ethics in Automation.