Engineers have combined imaging, processing, machine learning and memory in one tiny electronic chip, powered by light.

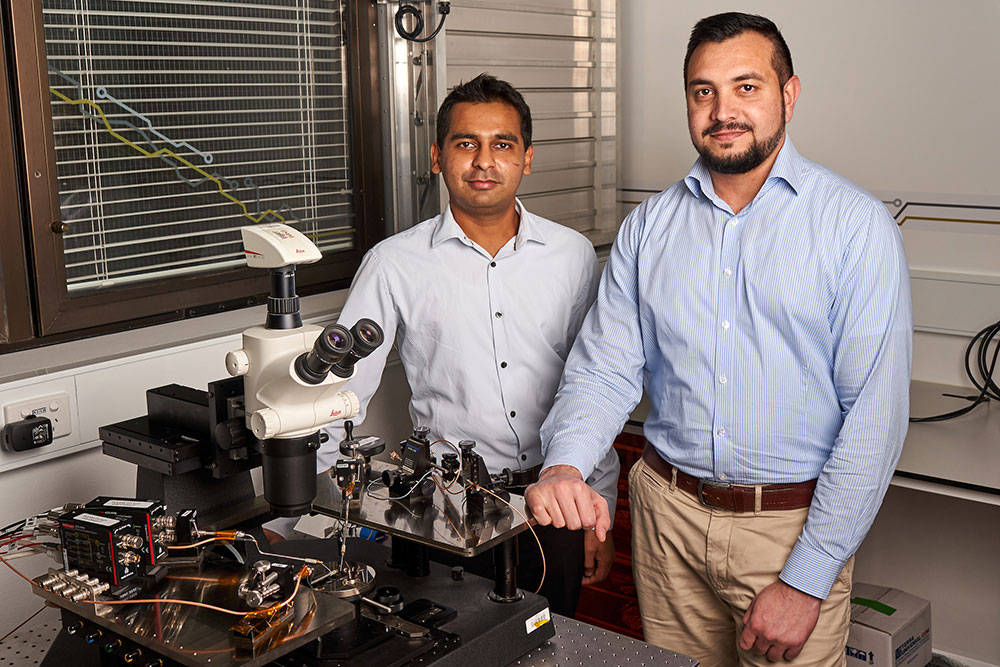

A team from the Royal Melbourne Institute of Technology (RMIT) led the development of the single nanoscale chip, which shrinks artificial intelligence (AI) technology by imitating the way that the human brain processes visual information.

It combines the core software needed to drive AI with image-capturing hardware in a single electronic device.

This means the chip can capture and automatically enhance images, classify numbers and be trained to recognise patterns and images with an accuracy rate of more than 90 per cent.

“We have been working on developing brain-like devices for the past five years,” explained RMIT Associate Professor Sumeet Walia, who oversaw the project.

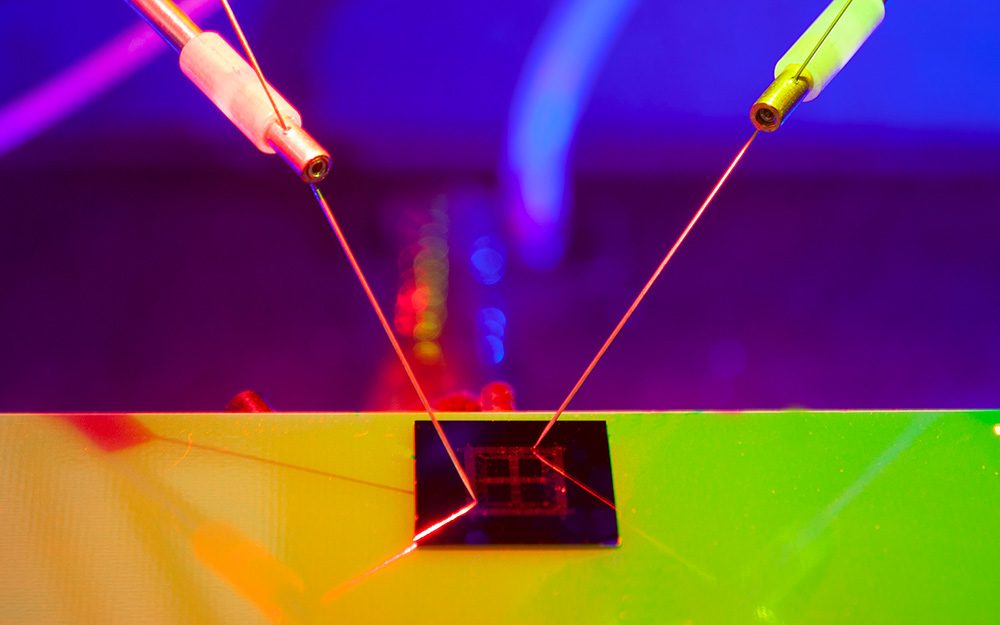

“About two years ago, we discovered the unique ability of black phosphorus to showcase a very peculiar electrical response when stimulated with different colours of light.”

Walia, who was one of create’s 2018 Most Innovative Engineers, said the prototype, developed in collaboration with peers in the United States and China, essentially turned a thought on its head and used it for something very exciting and otherwise impossible.

“This led us to conceptualising the idea of a fully light-operated device that can mimic how neurons in the brain connect — make memories — and disconnect, or break memories,” he said.

“The technology actually exploits defects or imperfections in the material which are normally looked upon as detriments.”

The breakthrough in neurobiotics brings the researchers closer to an all-in-one AI device inspired by how the brain learns by imprinting vision as memory.

How it works

“Imagine a dash cam in a car that’s integrated with our neuro-inspired hardware — this means it can recognise lights, signs, objects and make instant decisions, without having to connect to the internet,” Walia said.

“By bringing it all together into one chip, we can deliver unprecedented levels of efficiency and speed in autonomous and AI-driven decision-making.”

Conventional imaging and recognition systems demand extensive computational resources due to data storage, pre-processing and chip-to-chip communication.

“This is because separate chips are needed in combination with complicated optics to capture, store and process image information, and all this requires a series of iterative computing steps,” Walia explained.

“This results in high energy requirements and data latency. Our idea was, ‘what if we can mimic human vision that employs a highly efficient imaging and recognition process?’”

The prototype is inspired by optogenetics, an emerging tool in biotechnology that allows scientists to delve into the body’s electrical system with great precision and use light to manipulate neurons.

Walia said it is a further step in deploying devices to perform more complex operations similar to the human vision system.

“By capturing optical images, converting them into electrical data, like the eye and optic nerve, and subsequently recognising and storing this data, like the brain, via in-memory analog computing, our device provides a new pathway to developing machine vision in a standalone chip without any external processing,” he said.

Coming to machines near you

As for when we’ll see this technology in our lives, Walia believes it will be sooner than we probably imagine.

“Big industry players and governments are all getting on board due to the massive benefit across sectors,” he said.

“We already have smart devices. With this technology, we will have self-learning hardware whose working principle is like the brain, which is the most energy efficient computer designed by nature.”

Combining so much functionality into one compact device means it could broaden the horizons for machine learning and AI to be integrated into smaller applications, said study lead author Dr Taimur Ahmed.

“Using our chip with artificial retinas, for example, would enable scientists to miniaturise that emerging technology and improve the accuracy of the bionic eye,” he said.

“Our prototype is a significant advance towards the ultimate in electronics: a brain-on-a-chip that can learn from its environment just like we do.”

In the long term, large-scale implantable machine vision could be realised through the integration of such processors into technologies across healthcare, biomedical imaging, logistics, transport and forensics, among other areas.

“Scaling the technology up and developing thousands of devices on a single chip is the next step,” Walia added.

“There are further improvements in processing speed that are required but these will naturally be solved as more focused efforts are directed towards this technology.”