Last week Rethink Robotics, an early pioneer in the field of collaborative robots, announced it was shutting down operations. In light of this, create revisits a Q&A we did with the company’s co-founder and chief technology officer, Rodney Brooks, in 2015 – when they were still one of the few players in the game.

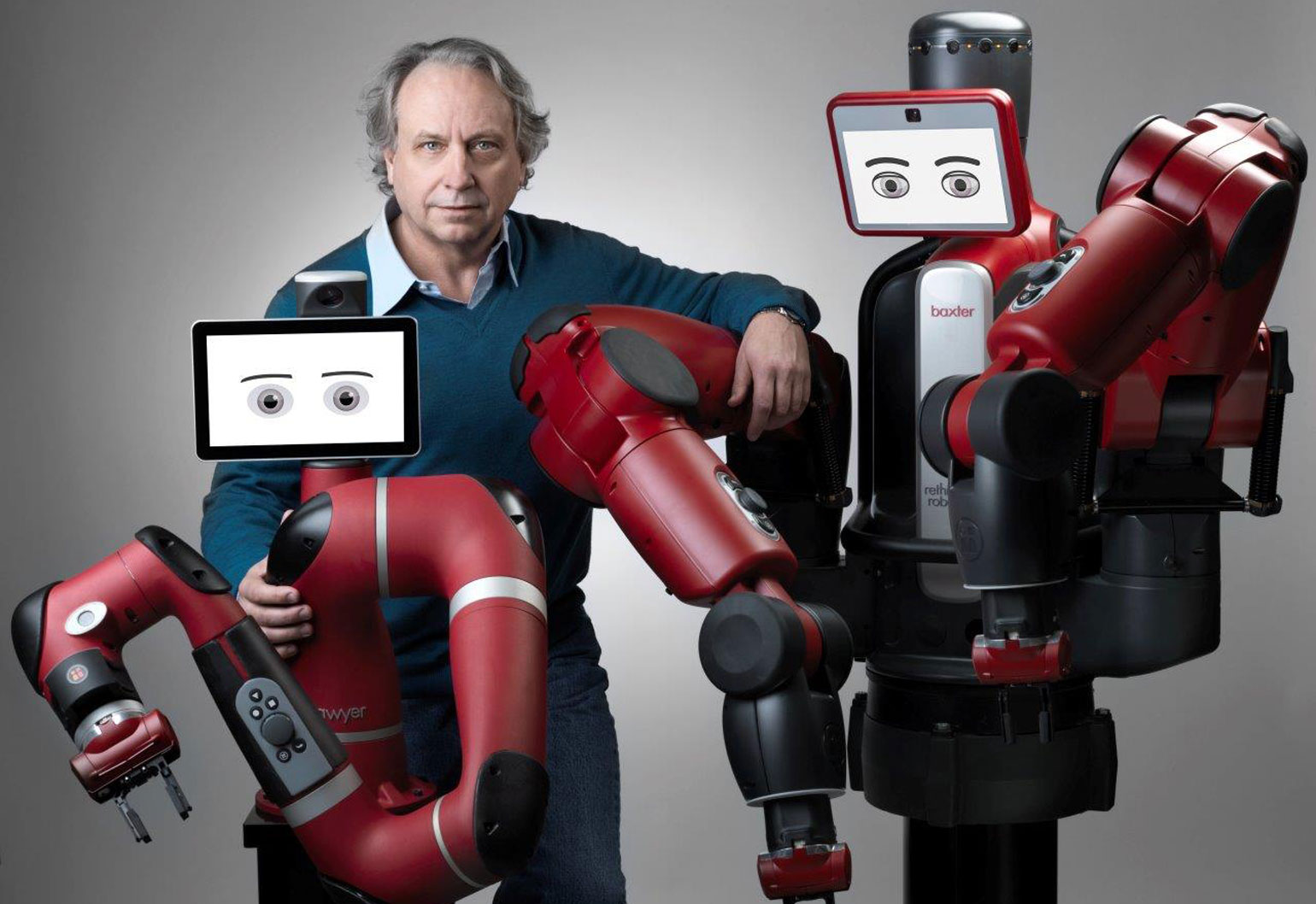

Rodney Brooks is often credited with transforming the world of robotics. The Adelaide-born MIT Professor Emeritus literally turned the world of artificial intelligence on its head by taking a bottom-up approach to building robots.

His subsumption architecture was inspired by mosquitoes – Brooks realised the insects could move effectively in their environment despite having very little brainpower.

He co-founded iRobot, a company perhaps best known for its Roomba vacuums, and

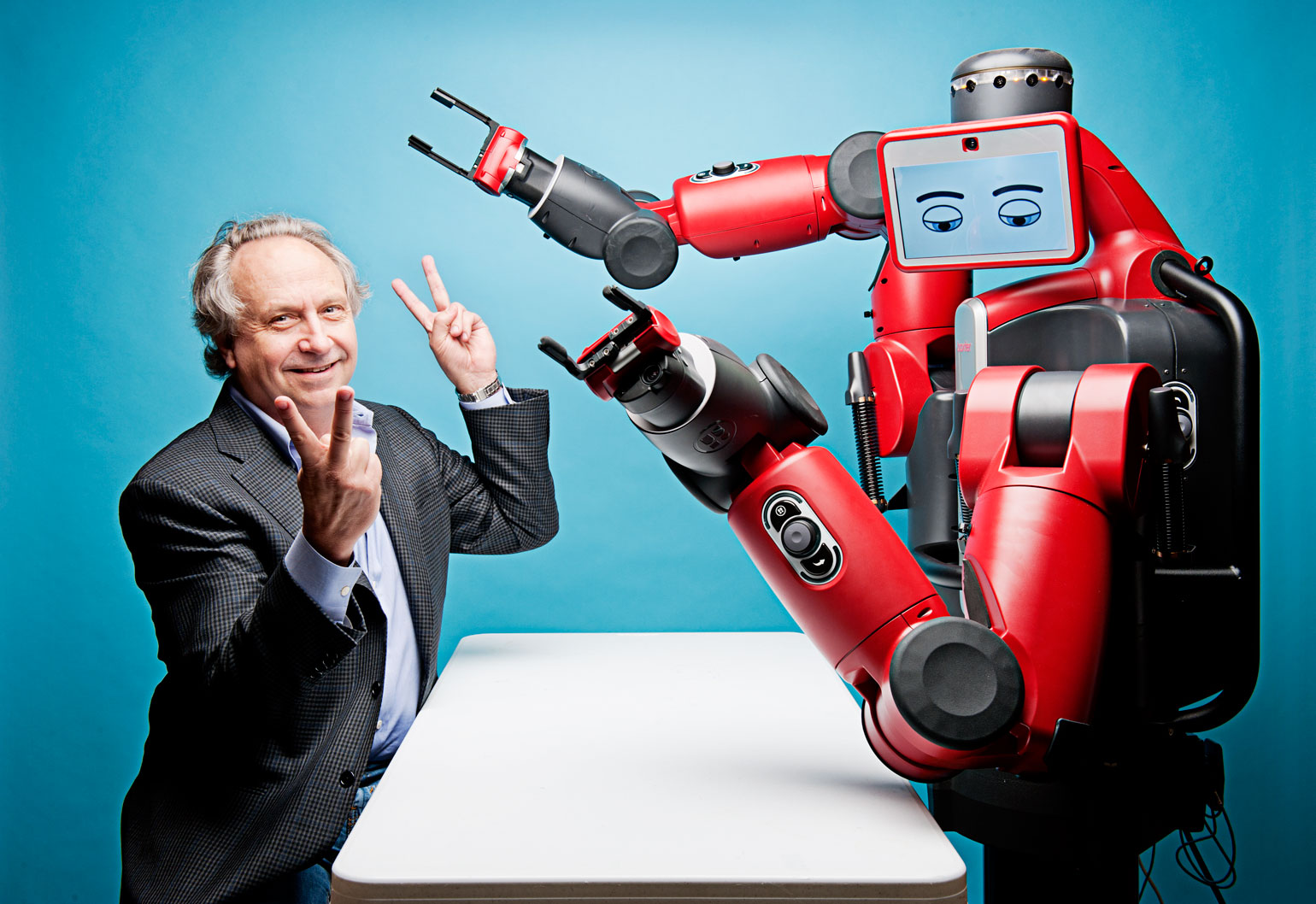

is currently chairman and chief technology officer of Rethink Robotics, which develops and manufactures collaborative robots.

Brooks talks about his creations, the complexities of behaviour-based programming, and why collaborative robots are the workhorses of the future.

create: Is it true you built your first robot when you were 12?

RODNEY BROOKS: Yes, and this is the first description of it I’ve ever had to give in my professional career! Essentially, I made it out of telephone switches from old office switchboards. They were four-pole, double-throw switches and had three positions so they could be set to empty, cross or nought.

You started off with all the switches in the middle (empty) position and when the person played nought, they pushed the switch towards the nought side and that would light up a nought bulb.

That’s where the machine wanted to play a cross so you pushed another switch to the cross side and played for the machine. Then it was up to the person to play a nought again and determine what was considered a line. There were no active elements in that machine.

create: When did you decide to pursue a career in robotics?

RB: I was always interested in robots and in my teen years, read a book by W. Grey Walter, The Living Brain. In that he described his robots, Elmer and Elsie. He had used vacuum valves and I built the transistor version of that in my late teens.

While I was at Flinders University as an undergraduate, I worked with Dr Jerry Kautsky. He let me and another student have the university’s only computer all to ourselves for 12 hours every Sunday. This was an IBM 1130 with 16 K of memory.

That’s where we learned everything about computer science – by having to figure out how to write operating systems and compilers and user interfaces all by ourselves.

create: What’s the story behind Allen, Herbert and Genghis?

RB: Well, those were the robots I built when I was on the faculty at MIT. The first was Allen – it had a ring of 12 sonars and it could operate in a dynamic environment. It could avoid people and dynamically alter itself in unstructured environments. It was really the first AI robot that could handle dynamic interaction with people.

The second robot, Herbert, used onboard processing and had 30 infrared sensors, a laser scanner, and a magnetic compass to locate soft drink cans and keep itself oriented as it wandered throughout the MIT Artificial Intelligence Laboratory. After collecting an empty can, Herbert would return it to a recycling bin.

Herbert had 24 simple microprocessors. You could switch any of them off and the system would still work as the processing was distributed. It just wouldn’t be as good at some things.

Then came Genghis, the insect-like walking machine with six legs and compound eyes. That was at a time when there were very few walking machines and they were very slow.

Genghis was a dynamic system that adapted to changing terrain. It used a newer wheel inspired controller for the six legs and the wheel central coordination to get a stable gait. Genghis was on display at the Smithsonian because it had inspired work we went on to do later.

create: Does subsumption architecture form the basis of all collaborative robots today?

RB: Yes, they are similar at the bottom level. That’s what I’ve been calling this newly inspired architecture that was introduced in 1986 and became more explicit in Herbert.

It’s a bunch of simple finite state machines that interact more directly with sensors and actuators, and we use a variation of it in Baxter.

Over a period of 30 years it’s morphed into what is called behaviour-based programming. It isn’t necessarily represented by simple finite state machines anymore, but it’s an evolution from that.

create: Is that the basis of the Roomba autonomous vacuum cleaner?

RB: Yes, well that is explicitly based on behaviour-based programming. That was done at iRobot and we stepped into the market in September 2002. I believe about 14 million of them have been sold so far and we’re introducing about 2 million a year. It’s a pretty major product in that sense.

It has simple behaviours that let it clean the floor. When it finds dirt, a specific behaviour kicks in and it does a local search. It’s got various algorithms for getting itself unstuck. Another behaviour kicks in when its brushes get wound up in a power cord, so it can unwind itself.

When its battery is getting low, it goes into search mode looking for the home beacon. Then a guiding behaviour kicks in and it comes in to dock. It’s got a whole set of behaviours that kick in at different times.
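[A minimal sketch of the kind of behaviour arbitration described above, not iRobot’s actual code. The sensor flags, behaviour names and priority order are all assumptions made for illustration: behaviours are checked from highest to lowest priority, and the first one whose trigger fires takes control.]

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Sensors:
    """Hypothetical sensor flags; a real robot would derive these from hardware."""
    brush_tangled: bool = False
    stuck: bool = False
    battery_low: bool = False
    dirt_detected: bool = False


@dataclass
class Behaviour:
    name: str
    triggered: Callable[[Sensors], bool]  # when this behaviour wants control
    action: str                           # plain-text stand-in for motor commands


# Highest priority first. This ordering is an assumption, not the product's.
BEHAVIOURS: List[Behaviour] = [
    Behaviour("unwind_cord", lambda s: s.brush_tangled, "reverse brushes to unwind"),
    Behaviour("get_unstuck", lambda s: s.stuck, "run an escape manoeuvre"),
    Behaviour("dock", lambda s: s.battery_low, "search for home beacon, then dock"),
    Behaviour("spot_clean", lambda s: s.dirt_detected, "do a local search over the dirt"),
    Behaviour("cover_floor", lambda s: True, "keep cleaning the floor"),  # default
]


def select_behaviour(sensors: Sensors) -> Behaviour:
    """Return the highest-priority behaviour whose trigger condition holds."""
    for behaviour in BEHAVIOURS:
        if behaviour.triggered(sensors):
            return behaviour
    raise RuntimeError("no behaviour triggered")  # unreachable: the default always fires


if __name__ == "__main__":
    reading = Sensors(battery_low=True, dirt_detected=True)
    chosen = select_behaviour(reading)
    print(chosen.name, "->", chosen.action)
```

[There is no central plan in this sketch: each behaviour only looks at the sensor readings, and a higher-priority behaviour simply overrides the ones below it when its condition is met.]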

create: What inspired you to set up Rethink Robotics?

RB: During the manufacturing of Roomba in China in 2004, I saw early signs that we were not going to have an infinite supply of labour in China in the way that we all thought earlier.

Worker turnover of 20 per cent per year is not considered particularly bad. Imagine trying to run an organisation where you get 20 per cent turnover per month. This is what was happening with Chinese manufacturers and led to labour shortages.

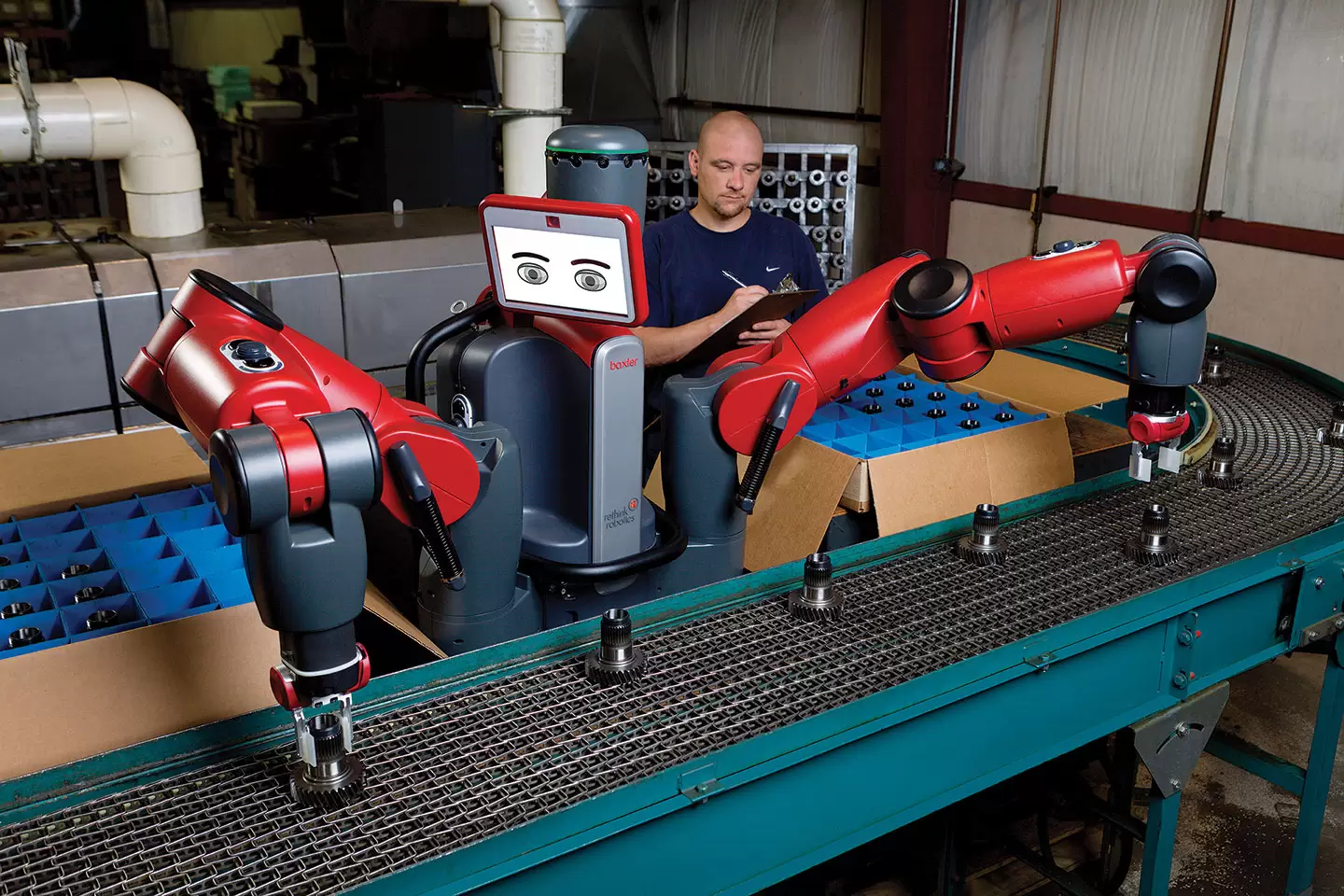

How could we provide robots to make up that difference? The old industrial robots were not going to cut it because getting them to work is such an intensive operation requiring extensive planning. This just does not work for SMEs.

The neat thing with Baxter and Sawyer is you just take a robot out of the box. It’s already got software. You show it how to do a task. You don’t need a consultant or need to know programming. All that is hidden from the users of the robot.

We say it knows what you mean and does what you want as you show it the task.

create: Are they based on the Intera platform?

RB: Most of our robots run on Intera. There are six levels of interactive – that’s where that name comes from.

Compared to our robots, industrial robots in general don’t normally come with much software. Intera comes with a whole software stack including intelligence, user interface and built-in vision.

create: What’s the best way to get robotics engineering from universities into the market?

RB: The market is a very different place and a very long distance from university research. In university, you do demonstrations with a team of PhD students running the demonstration.

In the real world, there are no experts around. People have to take the thing out of the box and it just has to work.

The end users aren’t concerned about what particular algorithm is running in the robot, whereas that’s what the researchers care about.

Researchers are looking for publication readiness. It’s a very different task. You go from final demonstration to something ready enough to get your first round of seed funding and you’ll need to factor in 10 times more work.

From seed funding to the first minimally viable product is another factor of 10 times more work. Then getting the actual product manufactured is another factor of 10 on top of that, and then the sales and marketing is yet another factor of 10.

What you did in your PhD was great stuff, but there is a factor of 10,000 times more work to get a robot to market. I’m not saying that academia is useless, quite the contrary. We need that, but it’s a very different activity.
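[Worked through, the four stages described above multiply rather than add. The stage labels below are paraphrased from the answer, and `\text` assumes the amsmath package:]

```latex
\[
\underbrace{10}_{\text{demo to seed funding}} \times
\underbrace{10}_{\text{seed to first product}} \times
\underbrace{10}_{\text{manufacturing}} \times
\underbrace{10}_{\text{sales and marketing}}
= 10^{4} = 10{,}000
\]
```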

create: What are the engineering challenges limiting the explosive growth of robotics right now?

RB: Its lack of manual dexterity. We haven’t had a lot of progress in 40 years in academia on that, although many people have been working on it.

Whenever I say that, someone will put up their hand and say, ‘But did you see the paper by such and such?’

It’s very different to, say, SLAM. Professor Hugh Durrant-Whyte from Sydney University was one of the people who really made that work. [In robotics, simultaneous localisation and mapping (SLAM) is the computational problem of constructing or updating a map of an unknown environment while simultaneously keeping track of an agent’s location within it.]

That leap worked as there were a few thousand people working on it in a sustained way for 10 years. It got better and better and better, so Hugh’s 1991 paper with John Leonard was a critical one.

It took another 10 years of hard effort to make it into a real-time practical algorithm. We haven’t had that sort of sustained work on manipulation.

The second biggest challenge is still the classical robotics problem of the bin of parts. Grasping individual objects from an unordered pile in a box is still a challenge.

People who are not roboticists or haven’t looked at computerisation are still surprised by that.

create: Do you buy into the fear that some people have regarding artificial intelligence and advances in robotics?

RB: There has been great progress but no robot has even 1 per cent of a smidgen of the sort of intelligence that a person has.

Some people say robots are getting exponentially better and will be smarter than humans by 2020 or 2025. And I say, “Not this century”.

People think I’m a grumpy old professor, which is fine. I guess I am a grumpy old professor, but I’ve seen the same arguments play out for the past 50 years. In five years from now, I guarantee you’re going to be seeing lots of articles in magazines saying, “What happened to the promise of AI?” Please, write that down and remember it.

create: One of your fears is that we don’t have enough robots. Why is that?

RB: That’s what I’m worried about because of the demographics in North America, Europe and now in China.

We’ve got labour problems because if you look at the number of 19-year-olds, it’s dropping 30 per cent this decade. The ratio of older people to working age people is going up by a big factor.

Who is going to provide the services to people who are old? There are not going to be enough people to do that unless you have massive immigration, and many countries don’t favour this.

It has to be machines. In my current course, I show a 2014 Mercedes S class and I claim it’s an elder care robot. What I mean by that is it’s got so many driving safety features that a person who is older can drive more easily than they would be able to without those features in their car. That’s where I see robotics being helpful.

create: There’s recently been a flurry of announcements in terms of collaborative robots – ABB’s YuMi and the UR3 from Universal Robots. How is Baxter different?

RB: They’re good robots, but they’re more from the industrial robot side. Obviously I think we’ve got a better product in Baxter and now Sawyer.

They’re not as sensitive to forces as our robot. They’re not as adaptable to the changing workspace as our robot. Our robots are based on programming – not in robot coordinates, but in task coordinates, whereas those other robots are programmed in robot coordinates.
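[To illustrate the distinction between task coordinates and robot coordinates, here is a minimal planar sketch. It is not the Intera API: the function name, the tray example and all numbers are assumptions. A pick point is stored relative to a task frame, such as a tray, and converted to robot base coordinates only when needed, so moving the tray means updating one pose rather than re-teaching every point.]

```python
import math
from typing import Tuple

# Pose of the task frame in the robot base frame: x, y in metres, heading in radians.
Pose2D = Tuple[float, float, float]
Point = Tuple[float, float]


def task_to_robot(task_frame: Pose2D, point_in_task: Point) -> Point:
    """Convert a point given in task coordinates into robot base coordinates."""
    frame_x, frame_y, heading = task_frame
    px, py = point_in_task
    cos_h, sin_h = math.cos(heading), math.sin(heading)
    # Rotate the point by the frame's heading, then translate by the frame's origin.
    return (frame_x + cos_h * px - sin_h * py,
            frame_y + sin_h * px + cos_h * py)


# The task is taught once, relative to the tray (hypothetical values).
pick_in_task: Point = (0.10, 0.05)

# The tray is moved and turned by 90 degrees; only its pose changes.
tray_before: Pose2D = (0.50, 0.20, 0.0)
tray_after: Pose2D = (0.60, 0.30, math.pi / 2)

if __name__ == "__main__":
    print(task_to_robot(tray_before, pick_in_task))
    print(task_to_robot(tray_after, pick_in_task))
```

[How the tray’s new pose is found, for example with built-in vision, is outside the scope of this sketch.]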